My research lies at the intersection of robotics and machine learning, with a focus on reinforcement learning. I study how to learn policies that work in real-world robotic systems and how to improve data efficiency by leveraging policy ensembles in large-scale training settings.

Rethinking Policy Diversity in Ensemble Policy Gradient in Large-Scale Reinforcement Learning

ICLR 2026 To Appear (Poster)

Scaling reinforcement learning to tens of thousands of parallel environments has motivated ensemble-based policy gradient methods that use multiple policies to collect diverse samples. However, simply increasing the amount of exploration can degrade its quality and harm training stability.

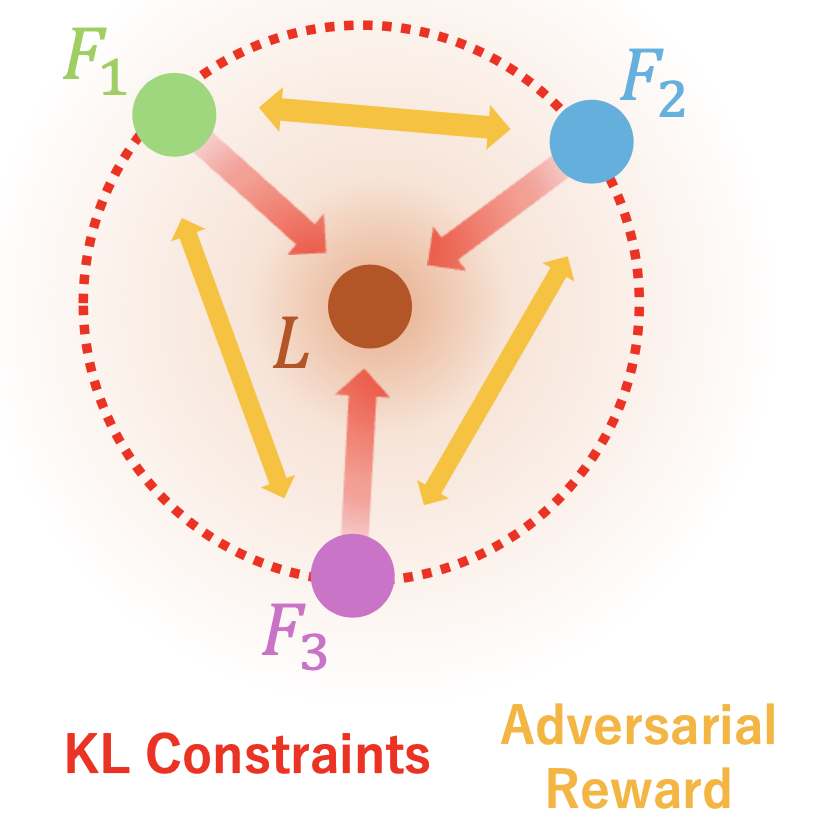

In this work, we theoretically analyze the role of inter-policy diversity and propose Coupled Policy Optimization (CPO), which regulates leader-follower misalignment using KL constraints. CPO lets follower policies explore the neighborhood of a leader policy, and it outperforms strong baselines across multiple dexterous manipulation tasks in both sample efficiency and final performance. Our analysis further clarifies how leader-follower divergence and KL constraints influence learning dynamics.
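To make the idea of a KL constraint between a leader and a follower concrete, here is a minimal sketch. It is not the CPO objective from the paper: it assumes diagonal Gaussian policies, picks the KL direction KL(follower || leader), and uses a simple hinge penalty. The names `diag_gaussian_kl`, `follower_loss`, `beta`, and `kl_target` are hypothetical and chosen only for illustration.

```python
import math


def diag_gaussian_kl(mu_f, std_f, mu_l, std_l):
    """Closed-form KL(follower || leader) for diagonal Gaussian action distributions.

    Each argument is a list of floats, one entry per action dimension.
    The KL direction is an assumption made for this sketch.
    """
    total = 0.0
    for mf, sf, ml, sl in zip(mu_f, std_f, mu_l, std_l):
        total += math.log(sl / sf) + (sf ** 2 + (mf - ml) ** 2) / (2.0 * sl ** 2) - 0.5
    return total


def follower_loss(pg_loss, kl, beta=1.0, kl_target=0.05):
    """Add a hinge penalty when the follower drifts beyond kl_target from the leader.

    pg_loss is the follower's ordinary policy-gradient loss (computed elsewhere).
    beta and kl_target are hypothetical hyperparameters, not values from the paper.
    """
    excess = max(0.0, kl - kl_target)
    return pg_loss + beta * excess


# Usage: a follower whose action mean is shifted away from the leader's.
kl = diag_gaussian_kl([0.3, -0.1], [1.0, 1.0], [0.0, 0.0], [1.0, 1.0])
loss = follower_loss(pg_loss=0.7, kl=kl, beta=2.0, kl_target=0.01)
```

In this sketch the penalty is zero while the follower stays within `kl_target` of the leader, so the follower is free to explore in the leader's neighborhood and is pulled back only when it drifts further away.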

Naoki Shitanda, Motoki Omura, Tatsuya Harada, Takayuki Osa. "Rethinking Policy Diversity in Ensemble Policy Gradient in Large-Scale Reinforcement Learning." International Conference on Learning Representations (ICLR), 2026 (To Appear, Poster).

[project page] [arXiv] [OpenReview] [lab page] [RIKEN AIP news]